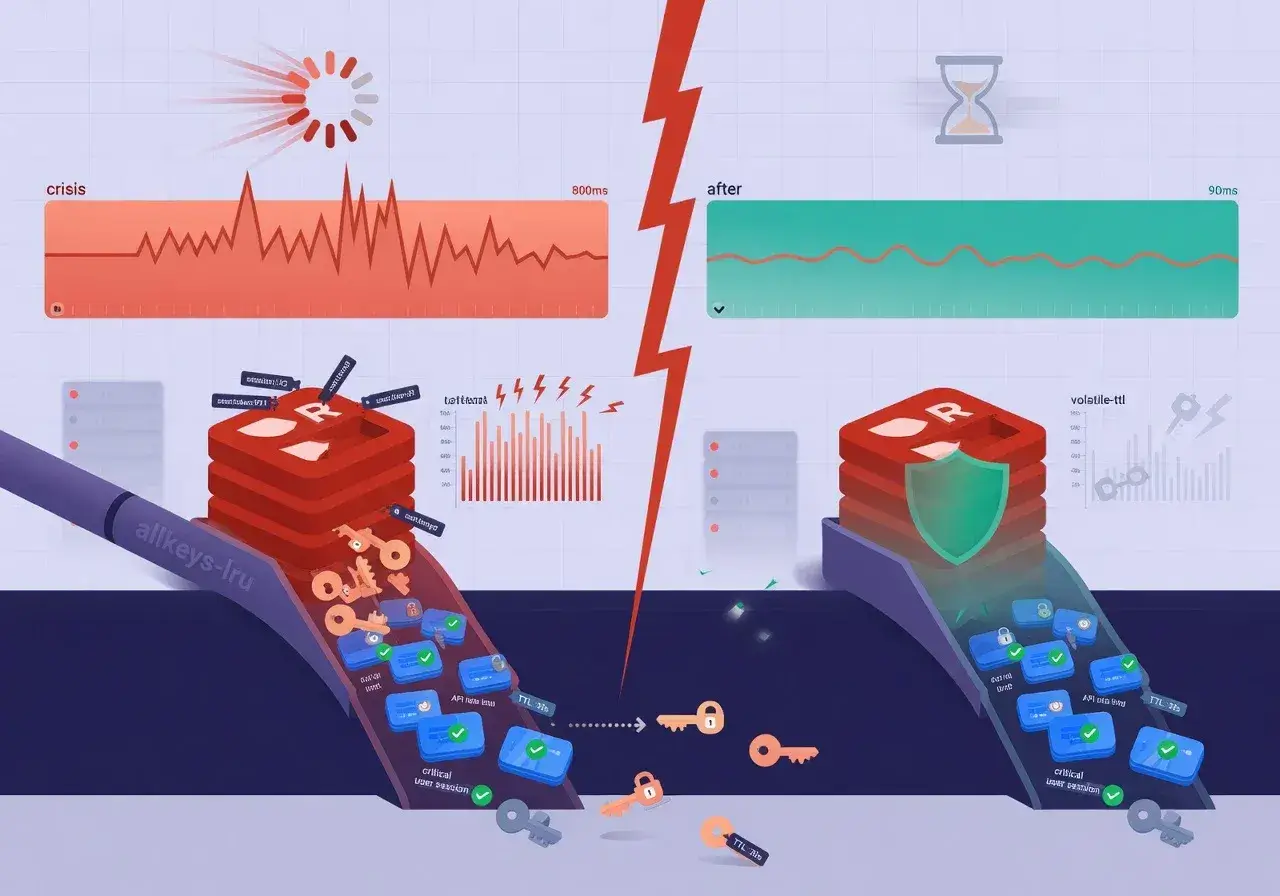

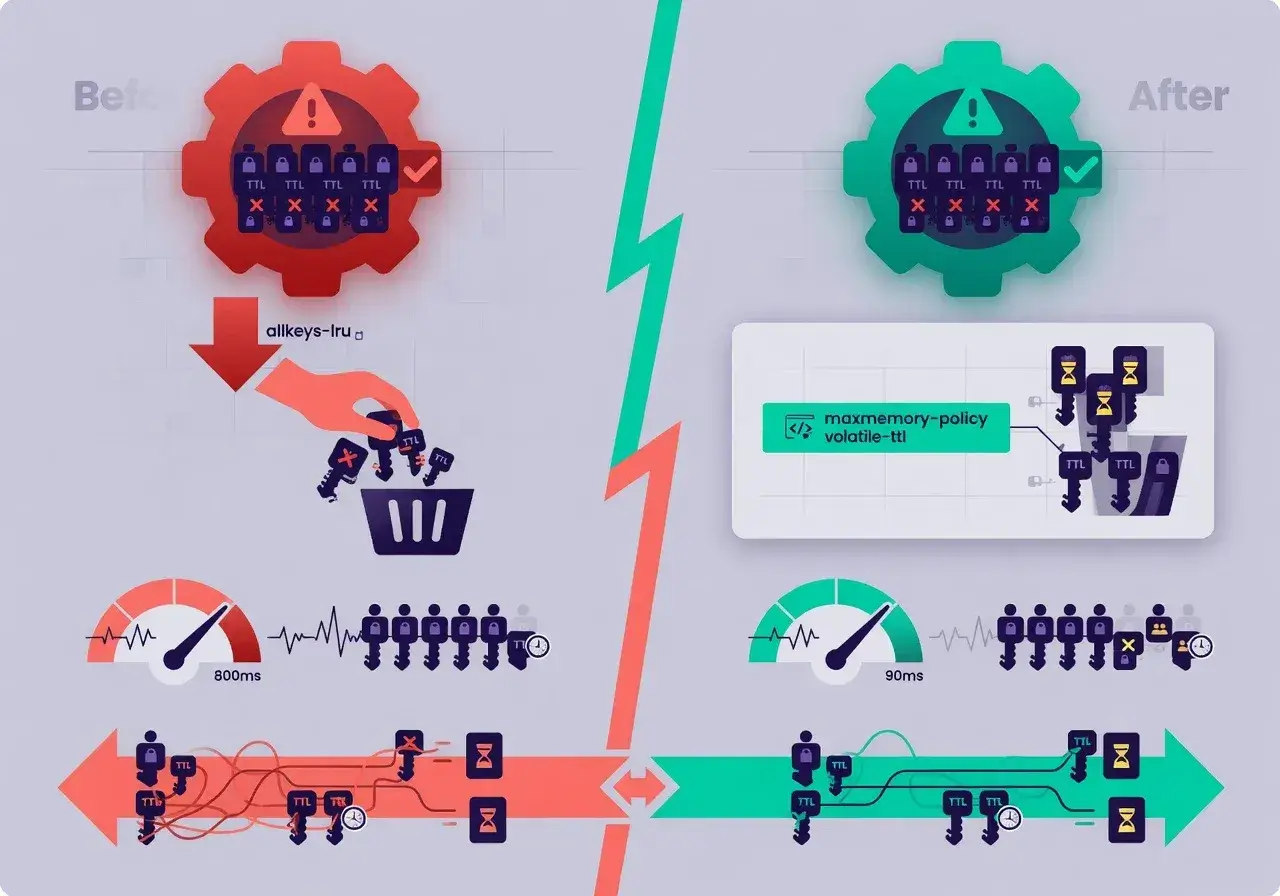

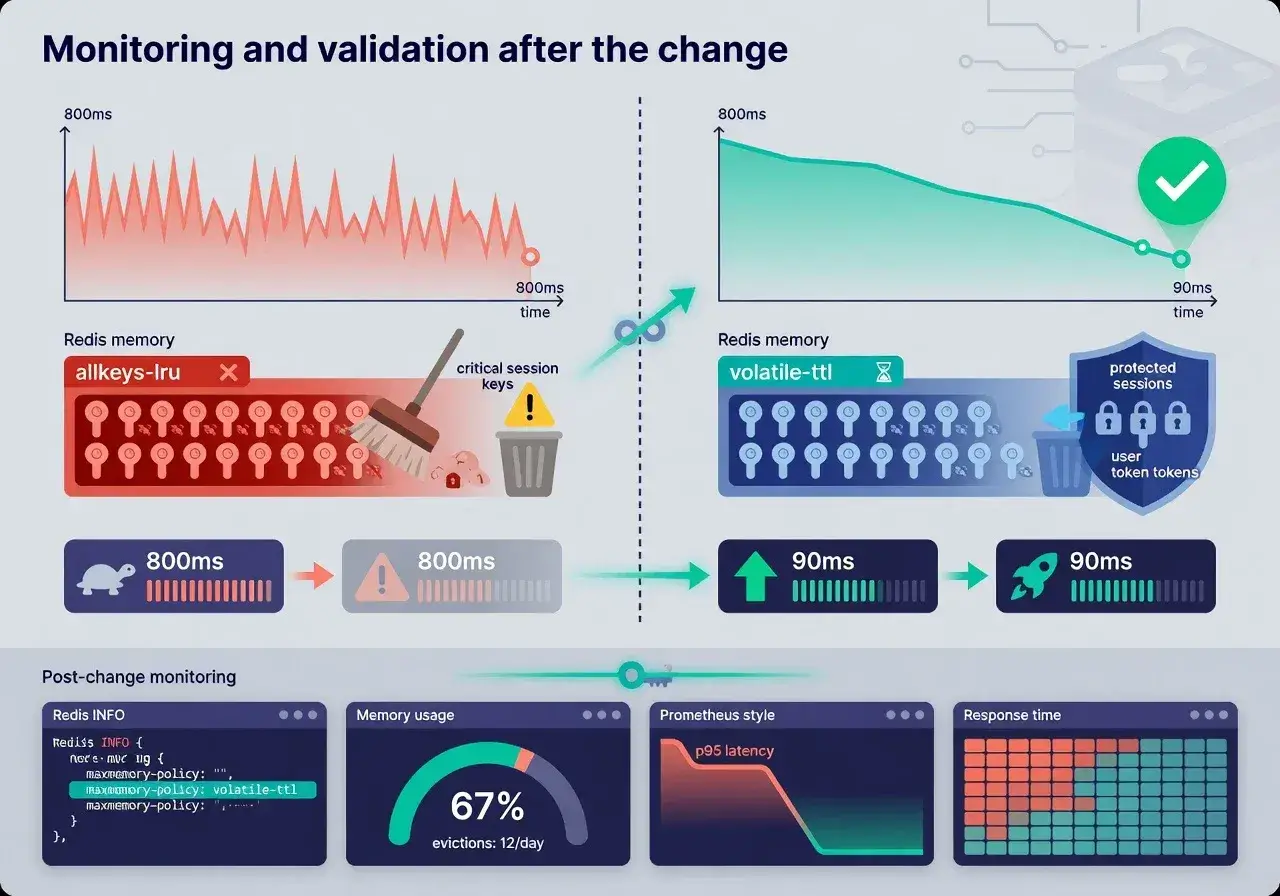

Changing the maxmemory-policy setting in Redis from allkeys-lru to volatile-ttl reduced application response time from 800ms to 90ms. The new policy restricts eviction to keys that carry a TTL, so expensive cache entries stored without an expiration survived memory pressure and only temporary keys were removed.

A single Redis config line cutting response time from 800ms to 90ms sounds almost too good to be true, yet that is exactly what happened during a production crisis when our API was crawling under load. One configuration adjustment transformed our caching strategy and saved our infrastructure budget.

The performance crisis that led to discovery

Our application served thousands of requests per minute, but response times were consistently hovering around 800ms. Users complained about sluggish page loads, and our monitoring dashboards painted a grim picture.

The issue became critical during peak hours when Redis started evicting keys aggressively. Our application relied heavily on cached database queries, user sessions, and computed results. When Redis removed these entries to free memory, the application had to regenerate them from scratch, creating a cascading performance problem.

After analyzing Redis metrics, we discovered that the eviction policy our instance was running, allkeys-lru, was removing both temporary and permanent cache entries indiscriminately. This meant critical data that took significant processing time to generate was being discarded alongside short-lived session tokens.

Understanding Redis eviction policies

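Redis offers several eviction policies that determine which keys get removed when the maxmemory limit is reached. Each policy serves different use cases and impacts performance differently. The list below covers the four that matter for this story; others, such as noeviction and the LFU variants, exist as well.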

Available eviction strategies

- allkeys-lru removes least recently used keys from the entire dataset

- volatile-ttl removes keys with expiration times, prioritizing those expiring soonest

- allkeys-random removes random keys from the entire dataset

- volatile-lru removes least recently used keys only from those with expiration set
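To make the difference between these policies concrete, here is a toy model of victim selection. It is only a sketch: real Redis samples a handful of keys and approximates LRU rather than scanning everything, and the `Entry` class, `pick_victim` function, and key names are all invented for illustration.

```python
from dataclasses import dataclass
from typing import Optional
import random


@dataclass
class Entry:
    key: str
    last_access: float          # larger value = used more recently
    expire_at: Optional[float]  # None = no TTL set


def pick_victim(entries, policy, rng=random):
    """Return the key a simplified version of `policy` would evict, or None."""
    volatile = [e for e in entries if e.expire_at is not None]
    if policy == "allkeys-lru":
        pool, rank = entries, lambda e: e.last_access
    elif policy == "volatile-lru":
        pool, rank = volatile, lambda e: e.last_access
    elif policy == "volatile-ttl":
        pool, rank = volatile, lambda e: e.expire_at
    elif policy == "allkeys-random":
        return rng.choice(entries).key if entries else None
    else:
        raise ValueError(f"unsupported policy: {policy}")
    # An empty pool means nothing is eligible for eviction.
    return min(pool, key=rank).key if pool else None


entries = [
    Entry("report:q3", last_access=10.0, expire_at=None),     # expensive, idle, no TTL
    Entry("session:a", last_access=50.0, expire_at=120.0),    # expires soonest
    Entry("ratelimit:b", last_access=20.0, expire_at=300.0),  # least recently used TTL key
]

print(pick_victim(entries, "allkeys-lru"))   # report:q3
print(pick_victim(entries, "volatile-lru"))  # ratelimit:b
print(pick_victim(entries, "volatile-ttl"))  # session:a
```

Under allkeys-lru the idle but expensive report is the first to go, which is the failure mode described in this article; both volatile policies leave it alone.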

The allkeys-lru policy our instance was running makes sense for general caching scenarios but proved disastrous for our mixed workload. (Open-source Redis itself ships with noeviction as the default, so check what your own deployment actually uses.) We stored both ephemeral data (like session tokens expiring in minutes) and expensive computational results (like aggregated analytics data) in the same Redis instance.

The configuration change that made the difference

After researching our cache access patterns, we identified that roughly 30% of our keys had explicit TTL values set, representing temporary data. The remaining 70% were critical cache entries that should only be invalidated programmatically.
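One way to estimate that split is from the `INFO keyspace` section, which reports a `keys` and an `expires` count per database. The helper below is a minimal sketch that assumes the section has already been parsed into a dictionary (redis-py's `info("keyspace")` returns this shape); the function name and sample numbers are made up for illustration.

```python
def volatile_share(keyspace_info):
    """Fraction of keys that carry a TTL, summed across all databases.

    `keyspace_info` is assumed to look like parsed `INFO keyspace` output,
    e.g. {"db0": {"keys": 1000, "expires": 300, "avg_ttl": 0}}.
    """
    total = sum(db["keys"] for db in keyspace_info.values())
    expiring = sum(db["expires"] for db in keyspace_info.values())
    return expiring / total if total else 0.0


# Made-up numbers chosen to mirror the 30/70 split described above.
sample = {"db0": {"keys": 1000, "expires": 300, "avg_ttl": 0}}
print(volatile_share(sample))  # 0.3
```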

We modified the Redis configuration file with a single line change, switching from allkeys-lru to volatile-ttl. This instructed Redis to only evict keys with expiration times set, leaving our critical cache entries untouched.

Implementation steps

- Backed up existing Redis configuration and data

- Changed maxmemory-policy to volatile-ttl in redis.conf

- Restarted Redis service with new configuration

- Monitored eviction metrics and response times closely
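The steps above edit redis.conf and restart the service. As an alternative that this article did not use, the same setting can be changed on a running server with `CONFIG SET`. The sketch below assumes a client object exposing redis-py style `config_get` and `config_set` methods; `switch_policy` is a hypothetical helper name.

```python
def switch_policy(client, new_policy="volatile-ttl"):
    """Set maxmemory-policy at runtime and return (old, new) as the server reports them.

    `client` is assumed to expose redis-py style `config_get` / `config_set`,
    for example an instance of `redis.Redis`.
    """
    old = client.config_get("maxmemory-policy")["maxmemory-policy"]
    client.config_set("maxmemory-policy", new_policy)
    # Read the value back instead of trusting that the write took effect.
    current = client.config_get("maxmemory-policy")["maxmemory-policy"]
    if current != new_policy:
        raise RuntimeError(f"policy is still {current!r}")
    return old, current
```

A runtime change is lost on restart unless the `maxmemory-policy volatile-ttl` line is also written to redis.conf, so the file edit from the steps above is still needed for the setting to stick.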

The results were immediate and dramatic. Response times dropped from 800ms to 90ms within minutes of applying the change. Cache hit rates improved from 65% to 94%, and database query load decreased by over 70%.

Why this configuration worked for our use case

The volatile-ttl policy aligned perfectly with our application architecture. We had already implemented good cache hygiene by setting appropriate TTL values on temporary data like user sessions, API rate limit counters, and short-lived computation results.

Our expensive cache entries, including aggregated reports and processed datasets, were stored without expiration times. These entries were only invalidated when underlying data changed through explicit cache invalidation logic in our application code.
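The write-side convention described above could look roughly like this. It is a sketch, not our production code: the helper names and JSON serialization are assumptions, and `client` is assumed to expose redis-py style `set(name, value, ex=...)` and `delete(*names)`.

```python
import json


def cache_ephemeral(client, key, value, ttl_seconds):
    """Store short-lived data with a TTL, making it eligible for eviction under volatile-ttl."""
    if ttl_seconds <= 0:
        raise ValueError("ttl_seconds must be positive")
    client.set(key, json.dumps(value), ex=ttl_seconds)


def cache_durable(client, key, value):
    """Store an expensive result without a TTL; volatile-* policies never evict it."""
    client.set(key, json.dumps(value))


def invalidate(client, *keys):
    """Explicitly drop durable entries when the underlying data changes."""
    if keys:
        client.delete(*keys)
```

Keeping the two write paths in separate functions makes it harder to forget a TTL on temporary data, which matters because under volatile-ttl a key without a TTL can only leave the cache through `invalidate`.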

By protecting these high-value cache entries from eviction, we eliminated the performance penalty of regenerating complex data structures. The application could consistently serve requests from memory rather than hitting the database repeatedly.

Monitoring and validation after the change

We tracked several key metrics to validate the improvement and ensure no negative side effects emerged from the configuration change.

Performance indicators tracked

- Average response time decreased from 800ms to 90ms

- 95th percentile response time improved from 1200ms to 150ms

- Cache hit rate increased from 65% to 94%

- Database connection pool utilization dropped from 85% to 30%

Redis memory usage patterns also changed noticeably. Instead of constant eviction churn, we observed stable memory consumption with periodic drops when temporary keys expired naturally. This reduced CPU overhead from eviction processing and improved overall Redis throughput.
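Cache hit rate and eviction churn can both be derived from counters in the `INFO stats` section (`keyspace_hits`, `keyspace_misses`, `evicted_keys`, `expired_keys`). Those counters are cumulative since server start, so the sketch below compares two snapshots. The function names and the sample values are illustrative, not our recorded data.

```python
COUNTERS = ("keyspace_hits", "keyspace_misses", "evicted_keys", "expired_keys")


def hit_rate(stats):
    """Hits divided by total lookups, or 0.0 when there were no lookups."""
    lookups = stats["keyspace_hits"] + stats["keyspace_misses"]
    return stats["keyspace_hits"] / lookups if lookups else 0.0


def interval_report(before, after):
    """Summarize what happened between two parsed `INFO stats` snapshots."""
    delta = {name: after[name] - before[name] for name in COUNTERS}
    return {
        "hit_rate": hit_rate(delta),
        "evicted": delta["evicted_keys"],
        "expired": delta["expired_keys"],
    }


# Made-up snapshots taken some interval apart.
before = {"keyspace_hits": 1000, "keyspace_misses": 500, "evicted_keys": 200, "expired_keys": 50}
after = {"keyspace_hits": 1940, "keyspace_misses": 560, "evicted_keys": 200, "expired_keys": 90}
print(interval_report(before, after))  # {'hit_rate': 0.94, 'evicted': 0, 'expired': 40}
```

An interval with zero evictions and a steady trickle of expirations matches the "stable memory with periodic drops" pattern described above.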

Lessons learned and best practices

This experience taught us valuable lessons about cache configuration and the importance of aligning technical settings with application behavior patterns.

Understanding your data access patterns is crucial before selecting an eviction policy. Generic defaults might work for simple use cases, but production applications often require customized configurations. We now audit our cache usage quarterly to ensure our Redis settings remain optimal as our application evolves.

Separating cache tiers by data importance is another strategy worth considering. Running separate Redis instances for critical versus ephemeral data provides even more control, though it adds operational complexity.

When this approach might not work

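While volatile-ttl solved our specific problem, it's not a universal solution. Applications that don't set TTL values on any keys would see no benefit, as Redis would have nothing to evict under memory pressure. In that situation volatile-ttl behaves like noeviction: once the memory limit is reached, write commands are rejected with an out-of-memory error. The same can happen in a mixed workload if the keys without a TTL grow to fill the limit on their own.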

If your application stores mostly permanent data without expirations, consider using the noeviction policy and scaling memory capacity instead. Alternatively, implement application-level TTL management to enable selective eviction strategies.
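A quick sanity check before switching policies is to ask how many keys the new policy could evict at all. The sketch below assumes the `INFO keyspace` section has been parsed into a dictionary with `keys` and `expires` counts per database; `eviction_candidates` is a hypothetical helper, not a Redis command.

```python
def eviction_candidates(policy, keyspace_info):
    """Number of keys that `policy` is allowed to evict.

    `keyspace_info` is assumed to look like parsed `INFO keyspace` output,
    e.g. {"db0": {"keys": 1000, "expires": 300}}.
    """
    total = sum(db["keys"] for db in keyspace_info.values())
    expiring = sum(db["expires"] for db in keyspace_info.values())
    if policy == "noeviction":
        return 0
    if policy.startswith("volatile-"):
        return expiring  # only keys with a TTL are eligible
    if policy.startswith("allkeys-"):
        return total
    raise ValueError(f"unknown policy: {policy}")


no_ttls = {"db0": {"keys": 5000, "expires": 0}}
print(eviction_candidates("volatile-ttl", no_ttls))  # 0
print(eviction_candidates("allkeys-lru", no_ttls))   # 5000
```

A result of zero means the server has nothing it may remove, so writes will start failing once the memory limit is hit.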

Teams should also consider their memory budget carefully. If you're consistently hitting memory limits, a configuration change alone won't solve underlying capacity issues. Proper capacity planning remains essential regardless of eviction policy.
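For capacity planning, the `INFO memory` section reports `used_memory` and `maxmemory` in bytes, which is enough for a rough headroom figure. This is a sketch under the assumption that the section has been parsed into a dictionary; the function name and sample sizes are invented.

```python
def memory_headroom(memory_info):
    """Fraction of maxmemory still free, or None when no limit is configured.

    `memory_info` is assumed to be the parsed `INFO memory` section, where
    `used_memory` and `maxmemory` are in bytes and maxmemory 0 means unlimited.
    """
    limit = memory_info["maxmemory"]
    if limit == 0:
        return None
    return max(0.0, 1.0 - memory_info["used_memory"] / limit)


GIB = 1024 ** 3
print(memory_headroom({"used_memory": 3 * GIB, "maxmemory": 4 * GIB}))  # 0.25
```

If this number sits near zero even after expired keys are cleaned up, the instance needs more memory or less data, whatever the eviction policy.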

Conclusion: Small changes, massive impact

A single configuration line transformed our application performance by aligning Redis behavior with our actual cache usage patterns. The journey from 800ms to 90ms response times demonstrates how understanding your infrastructure deeply can yield dramatic improvements without expensive hardware upgrades or architectural rewrites. Take time to audit your cache configurations and ensure they match your application's needs rather than relying on generic defaults.