Most backend developers unknowingly sacrifice up to 40% of their application's performance by neglecting proper database indexing strategies, leading to slower queries, increased server load, and frustrated users who eventually abandon poorly optimized systems.

The database mistake that costs most backend developers 40% in performance isn't about choosing the wrong database technology or using outdated hardware. It's about something far simpler yet devastatingly common: failing to implement proper indexing strategies from the start. Many developers rush through initial development phases, focusing on functionality while ignoring how their database queries will scale. This oversight becomes painfully obvious once applications grow beyond a few thousand records, transforming what should be millisecond queries into multi-second nightmares that drain resources and patience alike.

Why indexing matters more than you think

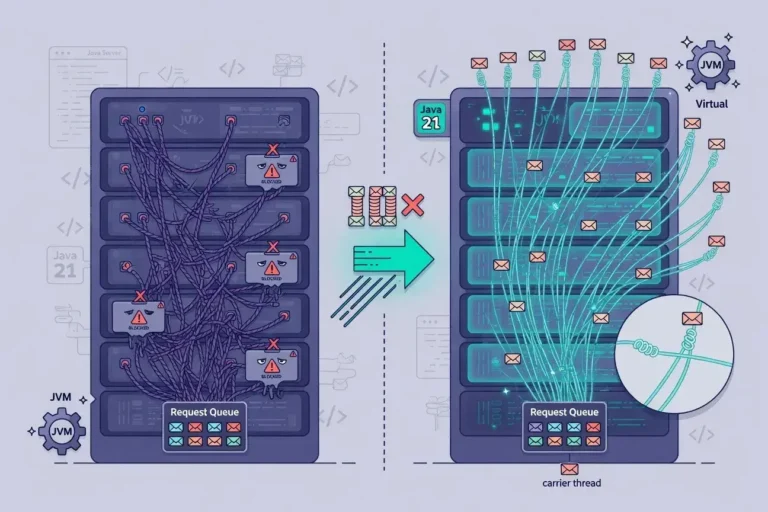

Database indexes work like the index at the back of a book: they let the system jump straight to the matching entries instead of reading every page. Primary keys are usually indexed automatically, but a query that filters on any other column without a suitable index falls back to a full table scan, examining each row in turn to find the matches.

The gap widens as data grows: the cost of a full scan rises linearly with the number of rows, while a B-tree index lookup rises only logarithmically. A table with 100 rows might not show noticeable slowdowns, but once you reach 100,000 or a million records, unindexed queries can take seconds instead of milliseconds. This degradation affects everything downstream: API response times increase, user interfaces lag, and server resources get consumed unnecessarily.
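To make the growth pattern concrete, here is a toy model in Python. It is only an illustration, not how any real engine is implemented: a linear pass over a list stands in for a full table scan, and a binary search over sorted keys stands in for descending a B-tree. The function names are made up for this sketch.

```python
def full_scan_steps(keys, target):
    """Check entries one by one, as a scan over an unindexed column would."""
    steps = 0
    for key in keys:
        steps += 1
        if key == target:
            break
    return steps


def index_lookup_steps(sorted_keys, target):
    """Binary search over sorted keys, a rough stand-in for a B-tree descent."""
    lo, hi, steps = 0, len(sorted_keys), 0
    while lo < hi:
        steps += 1
        mid = (lo + hi) // 2
        if sorted_keys[mid] < target:
            lo = mid + 1
        else:
            hi = mid
    return steps


for n in (100, 100_000, 1_000_000):
    keys = list(range(n))
    target = n - 1  # worst case for the scan: the match is the last entry
    print(n, full_scan_steps(keys, target), index_lookup_steps(keys, target))
```

In this model the scan touches every one of a million entries in the worst case, while the binary search needs at most 20 probes. Real indexes add constant factors and disk I/O, but the shape of the curve is the same.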

The real cost of missing indexes

- Query execution times increase from milliseconds to several seconds

- Database server CPU usage spikes during peak traffic periods

- Memory pressure grows as full scans pull entire tables through the database cache, pushing out frequently used data

- Application scalability becomes severely limited regardless of infrastructure investments

Understanding these consequences helps developers prioritize indexing as a fundamental architecture decision rather than an optimization afterthought.

Common indexing mistakes developers make

Beyond simply forgetting to add indexes, developers frequently make strategic errors that undermine performance gains or create new problems.

Over-indexing every column

Some developers overcompensate by indexing every column, which creates its own performance issues. Each index requires storage space and must be updated whenever data changes, slowing down INSERT, UPDATE, and DELETE operations. A pile of overlapping indexes also gives the query optimizer more candidates to weigh, and it can occasionally settle on a suboptimal execution plan as a result.
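The storage side of that trade-off is easy to observe. The sketch below uses Python's built-in `sqlite3` module as a convenient stand-in for a production database; the `events` table and its columns are hypothetical. It compares the database size in pages before and after indexing every non-key column.

```python
import os
import sqlite3
import tempfile


def page_count(conn):
    """Number of pages currently in the database file."""
    return conn.execute("PRAGMA page_count").fetchone()[0]


with tempfile.TemporaryDirectory() as tmp:
    conn = sqlite3.connect(os.path.join(tmp, "demo.db"))
    # Hypothetical table, used only for this illustration.
    conn.execute(
        "CREATE TABLE events ("
        "id INTEGER PRIMARY KEY, user_id INTEGER, kind TEXT, created_at TEXT)"
    )
    conn.executemany(
        "INSERT INTO events (user_id, kind, created_at) VALUES (?, ?, ?)",
        [(i % 500, "click", f"2024-01-{i % 28 + 1:02d}") for i in range(20_000)],
    )
    conn.commit()
    pages_before = page_count(conn)

    # Index every non-key column, as an over-eager schema might.
    for column in ("user_id", "kind", "created_at"):
        conn.execute(f"CREATE INDEX idx_events_{column} ON events ({column})")
    conn.commit()
    pages_after = page_count(conn)
    conn.close()

print(f"pages before: {pages_before}, after: {pages_after}")
```

Every extra page here is data the engine also has to keep in sync on each write, which is where the INSERT, UPDATE, and DELETE overhead comes from.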

Ignoring composite indexes

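Single-column indexes work well for simple queries, but many applications filter or sort by multiple columns simultaneously. Composite indexes covering multiple columns in the correct order can dramatically improve these queries, yet developers often overlook this optimization strategy. Order matters because a B-tree composite index on (a, b) can serve queries that filter on a alone or on a and b together, but generally not queries that filter on b alone.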
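Column order in a composite index can be checked directly against the query planner. This sketch assumes SQLite (via Python's `sqlite3` module) and a hypothetical `orders` table; other engines use different plan formats, but the leading-column behaviour is broadly similar.

```python
import sqlite3


def plan(conn, sql, params=()):
    """Return the detail text of SQLite's EXPLAIN QUERY PLAN output."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return " | ".join(row[3] for row in rows)


conn = sqlite3.connect(":memory:")
# Hypothetical schema for illustration.
conn.execute(
    "CREATE TABLE orders ("
    "id INTEGER PRIMARY KEY, customer_id INTEGER, status TEXT, total REAL)"
)
conn.execute(
    "CREATE INDEX idx_orders_customer_status ON orders (customer_id, status)"
)

# Filters on both columns, and on the leading column alone, can use the index.
both = plan(conn, "SELECT * FROM orders WHERE customer_id = ? AND status = ?", (42, "paid"))
leading = plan(conn, "SELECT * FROM orders WHERE customer_id = ?", (42,))
# A filter on only the trailing column cannot seek into it.
trailing = plan(conn, "SELECT * FROM orders WHERE status = ?", ("paid",))

for label, text in (("both", both), ("leading", leading), ("trailing", trailing)):
    print(f"{label}: {text}")
```

If most queries filter by status alone, this index is the wrong shape for them, and either a separate index or a reordered one would be needed.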

The key lies in balancing read performance against write overhead while understanding your application's actual query patterns through careful analysis and monitoring.

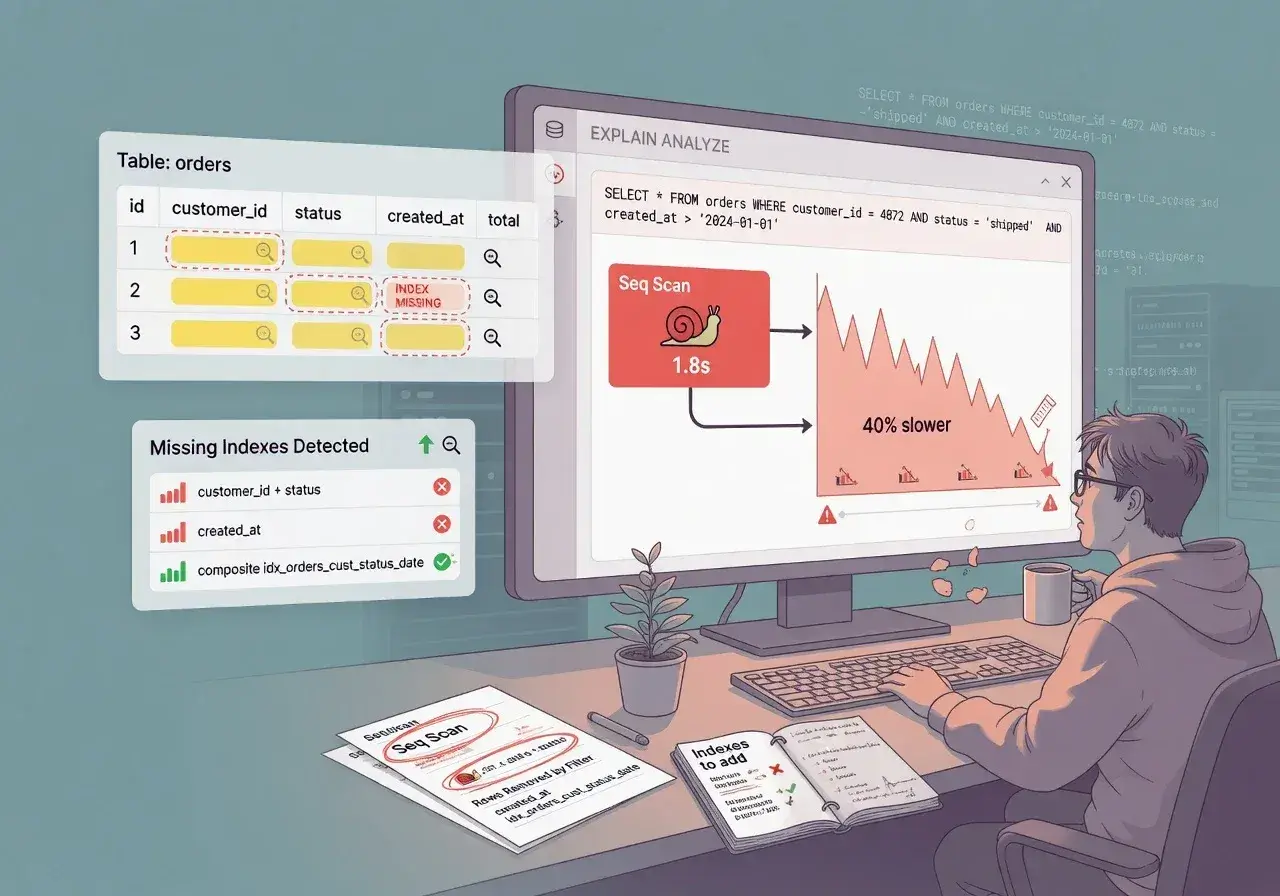

How to identify missing indexes in your database

Most database systems provide tools to expose performance bottlenecks caused by missing indexes. Learning to use these diagnostic features transforms guesswork into data-driven optimization.

PostgreSQL offers the pg_stat_statements extension, which tracks query execution statistics, along with EXPLAIN and EXPLAIN ANALYZE for inspecting execution plans. MySQL provides the slow query log and its own EXPLAIN command. These tools reveal which queries consume the most time and resources, highlighting where indexes would provide maximum benefit.

Analyzing query execution plans

- Look for full table scans in execution plans, shown as "Seq Scan" in PostgreSQL and as access type ALL in MySQL's EXPLAIN output

- Identify queries with high execution counts and long average durations

- Check for queries that examine thousands of rows to return just a few results

Regular monitoring of these metrics helps catch performance issues before they impact users, allowing proactive optimization rather than reactive firefighting.
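The first check in that list can be automated. The following is a minimal sketch using SQLite's `EXPLAIN QUERY PLAN` through Python's `sqlite3` module; the `find_full_scans` helper and the `users` table are hypothetical, and the string matching is specific to SQLite's plan wording, so it would need adapting for PostgreSQL or MySQL.

```python
import sqlite3


def find_full_scans(conn, queries):
    """Return the queries whose plan scans a table without using any index."""
    flagged = []
    for sql in queries:
        details = [row[3] for row in conn.execute("EXPLAIN QUERY PLAN " + sql)]
        # SQLite reports table scans as "SCAN ..." and index seeks as "SEARCH ...".
        if any(d.startswith("SCAN") and "INDEX" not in d for d in details):
            flagged.append(sql)
    return flagged


conn = sqlite3.connect(":memory:")
# Hypothetical schema: email is indexed, country is not.
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT, country TEXT)")
conn.execute("CREATE INDEX idx_users_email ON users (email)")

workload = [
    "SELECT * FROM users WHERE email = 'a@example.com'",
    "SELECT * FROM users WHERE country = 'DE'",
    "SELECT * FROM users WHERE id = 7",
]
flagged = find_full_scans(conn, workload)
print(flagged)
```

Only the query on the unindexed `country` column is flagged. A check like this could run in a test suite against the queries an application actually issues, so that a missing index shows up before production traffic does.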

Strategic indexing for maximum performance gains

Effective indexing requires understanding your application's query patterns and prioritizing the most impactful optimizations first.

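Start by indexing foreign key columns used in JOIN operations, as these queries often dominate application workloads. Do not assume the database has done this for you: PostgreSQL, for example, does not automatically create an index on the referencing column of a foreign key, whereas MySQL's InnoDB does. Next, focus on columns frequently used in WHERE clauses, especially those with high selectivity that filter data significantly. Finally, consider columns used in ORDER BY and GROUP BY operations, which can benefit from index-based sorting.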
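Index-based sorting is visible in the query plan. In this sketch (SQLite through Python's `sqlite3`, with a hypothetical `orders` table whose `customer_id` plays the role of a foreign key column), the same query is planned before and after adding an index that matches both the filter and the sort order.

```python
import sqlite3


def plan(conn, sql):
    """Return the detail text of SQLite's EXPLAIN QUERY PLAN output."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql).fetchall()
    return " | ".join(row[3] for row in rows)


conn = sqlite3.connect(":memory:")
# Hypothetical schema for illustration.
conn.execute(
    "CREATE TABLE orders ("
    "id INTEGER PRIMARY KEY, customer_id INTEGER, created_at TEXT)"
)

query = "SELECT id, created_at FROM orders WHERE customer_id = 7 ORDER BY created_at"

# Without an index, SQLite scans the table and sorts the matches separately.
before = plan(conn, query)

# Filter column first, sort column second: rows come out already ordered.
conn.execute(
    "CREATE INDEX idx_orders_customer_created ON orders (customer_id, created_at)"
)
after = plan(conn, query)

print("before:", before)
print("after: ", after)
```

The plan taken before the index exists includes a temporary B-tree built for the ORDER BY, which is the explicit sort step. With the index in place that step disappears, because the index already stores each customer's rows in `created_at` order.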

Index maintenance considerations

Indexes require ongoing maintenance to remain effective. Database systems can experience index fragmentation over time, reducing performance benefits. Scheduling regular index rebuilds or reorganizations keeps them optimized, though the specific approach depends on your database platform and workload characteristics.

Balancing these maintenance tasks against production workloads requires careful planning to avoid impacting user-facing operations during peak usage periods.

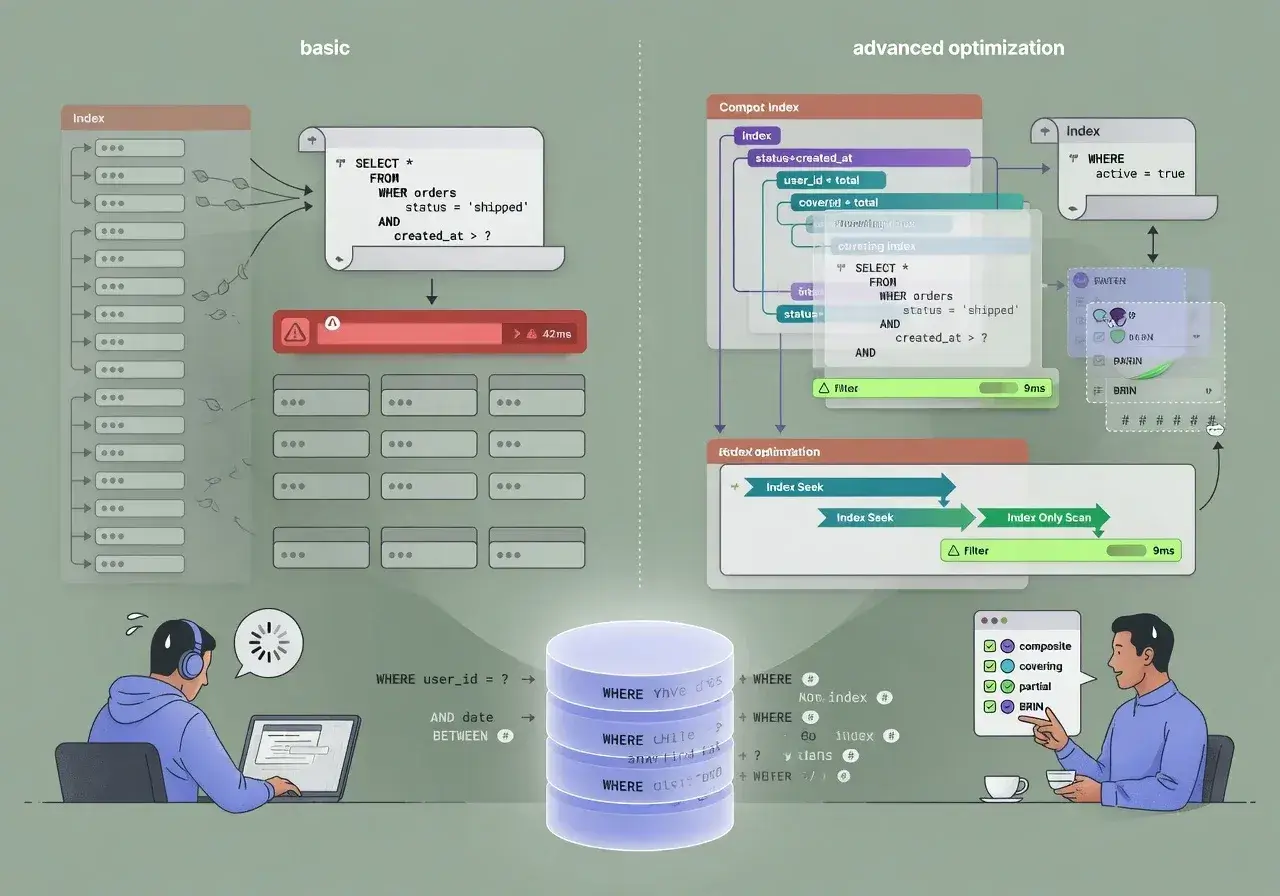

Beyond basic indexes: advanced optimization techniques

Once basic indexing is in place, several advanced strategies can squeeze out additional performance improvements.

Partial indexes for specific conditions

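Partial indexes cover only rows meeting specific criteria, reducing index size while maintaining performance for targeted queries. For example, indexing only active users rather than including deleted accounts can significantly reduce storage requirements and improve cache efficiency. Support varies by platform: PostgreSQL and SQLite offer partial indexes, SQL Server provides the equivalent as filtered indexes, and MySQL does not support them.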
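The active-users case might look like the sketch below, again using SQLite through Python's `sqlite3` as a stand-in. The `users` table and the `active` flag are assumptions made for illustration.

```python
import sqlite3


def plan(conn, sql):
    """Return the detail text of SQLite's EXPLAIN QUERY PLAN output."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql).fetchall()
    return " | ".join(row[3] for row in rows)


conn = sqlite3.connect(":memory:")
# Hypothetical schema: active = 1 marks live accounts, 0 marks deleted ones.
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, email TEXT, active INTEGER)")

# The WHERE clause keeps deleted accounts out of the index entirely.
conn.execute("CREATE INDEX idx_users_active_email ON users (email) WHERE active = 1")

# The query repeats the index condition, so the planner can use the index.
active_plan = plan(
    conn, "SELECT id FROM users WHERE active = 1 AND email = 'a@example.com'"
)
# Deleted accounts are not in the index, so this lookup cannot use it.
deleted_plan = plan(
    conn, "SELECT id FROM users WHERE active = 0 AND email = 'a@example.com'"
)

print("active: ", active_plan)
print("deleted:", deleted_plan)
```

The condition is written as a literal in the query on purpose. SQLite has to see that the query's WHERE clause implies the index's WHERE clause, and it cannot establish that if `active` is compared against a bound parameter.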

Covering indexes for query optimization

Covering indexes include all columns needed by a query, allowing the database to satisfy requests entirely from the index without accessing the table data. This technique eliminates additional I/O operations, providing substantial performance improvements for frequently executed queries.
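Whether an index covers a query depends on the exact column list, which the next sketch illustrates with SQLite via Python's `sqlite3`. The `orders` table is hypothetical, and the "COVERING INDEX" wording is SQLite's; other engines report the same idea in their own terms.

```python
import sqlite3


def plan(conn, sql):
    """Return the detail text of SQLite's EXPLAIN QUERY PLAN output."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql).fetchall()
    return " | ".join(row[3] for row in rows)


conn = sqlite3.connect(":memory:")
# Hypothetical schema for illustration.
conn.execute(
    "CREATE TABLE orders ("
    "id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL, notes TEXT)"
)
conn.execute("CREATE INDEX idx_orders_customer_total ON orders (customer_id, total)")

# Every column the query needs is in the index: no table access required.
covered = plan(conn, "SELECT total FROM orders WHERE customer_id = 42")

# 'notes' is not in the index, so each match also needs a table lookup.
not_covered = plan(conn, "SELECT total, notes FROM orders WHERE customer_id = 42")

print("covered:    ", covered)
print("not covered:", not_covered)
```

Adding one column to a SELECT list can silently turn a covered query into one that visits the table for every match, which is one reason `SELECT *` works against this technique.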

These advanced techniques require deeper understanding of database internals but can deliver remarkable performance gains when applied appropriately to high-impact queries.

Measuring the real impact of proper indexing

Implementing indexes without measuring their impact leaves you guessing whether optimizations actually improved performance or simply shifted bottlenecks elsewhere.

Establish baseline metrics before making changes: query execution times, database CPU usage, memory consumption, and application response times. After adding indexes, compare these metrics to quantify improvements and identify any unexpected side effects.
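A before-and-after comparison for a single query can be sketched as follows. This uses an in-memory SQLite database through Python's `sqlite3`, with a hypothetical `events` table and a made-up `median_query_ms` helper. Absolute numbers from a setup like this say little about a production system, so treat it as a template for the method rather than as a benchmark.

```python
import sqlite3
import statistics
import time


def median_query_ms(conn, sql, params=(), runs=20):
    """Median wall-clock time of a query in milliseconds over several runs."""
    samples = []
    for _ in range(runs):
        start = time.perf_counter()
        conn.execute(sql, params).fetchall()
        samples.append((time.perf_counter() - start) * 1000)
    return statistics.median(samples)


conn = sqlite3.connect(":memory:")
# Hypothetical table with 100,000 rows spread over 10,000 user ids.
conn.execute("CREATE TABLE events (id INTEGER PRIMARY KEY, user_id INTEGER, payload TEXT)")
conn.executemany(
    "INSERT INTO events (user_id, payload) VALUES (?, ?)",
    ((i % 10_000, "x" * 50) for i in range(100_000)),
)
conn.commit()

query = "SELECT COUNT(*) FROM events WHERE user_id = ?"

# 1. Record the baseline before changing anything.
baseline_ms = median_query_ms(conn, query, (1234,))

# 2. Apply the change under test.
conn.execute("CREATE INDEX idx_events_user_id ON events (user_id)")

# 3. Measure again with the same query and parameters.
indexed_ms = median_query_ms(conn, query, (1234,))

print(f"baseline: {baseline_ms:.3f} ms, indexed: {indexed_ms:.3f} ms")
```

Using the median over several runs keeps a single slow outlier from distorting the comparison. The same pattern applies to write-heavy statements, where the expectation is that the indexed timing gets slightly worse rather than better.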

Many developers discover that properly indexed databases not only respond faster but also handle significantly more concurrent users with the same hardware resources, effectively multiplying infrastructure value without additional costs.

Conclusion: making indexing a development priority

The 40% performance cost of neglecting database indexing isn't inevitable. By understanding indexing fundamentals, identifying missing indexes through systematic analysis, and implementing strategic optimizations, developers can dramatically improve application performance. Making indexing a priority during initial development rather than an afterthought during crisis management prevents performance problems before they impact users. The time invested in proper database design pays dividends throughout an application's lifetime, delivering faster responses, lower infrastructure costs, and better user experiences that distinguish professional applications from amateur implementations.