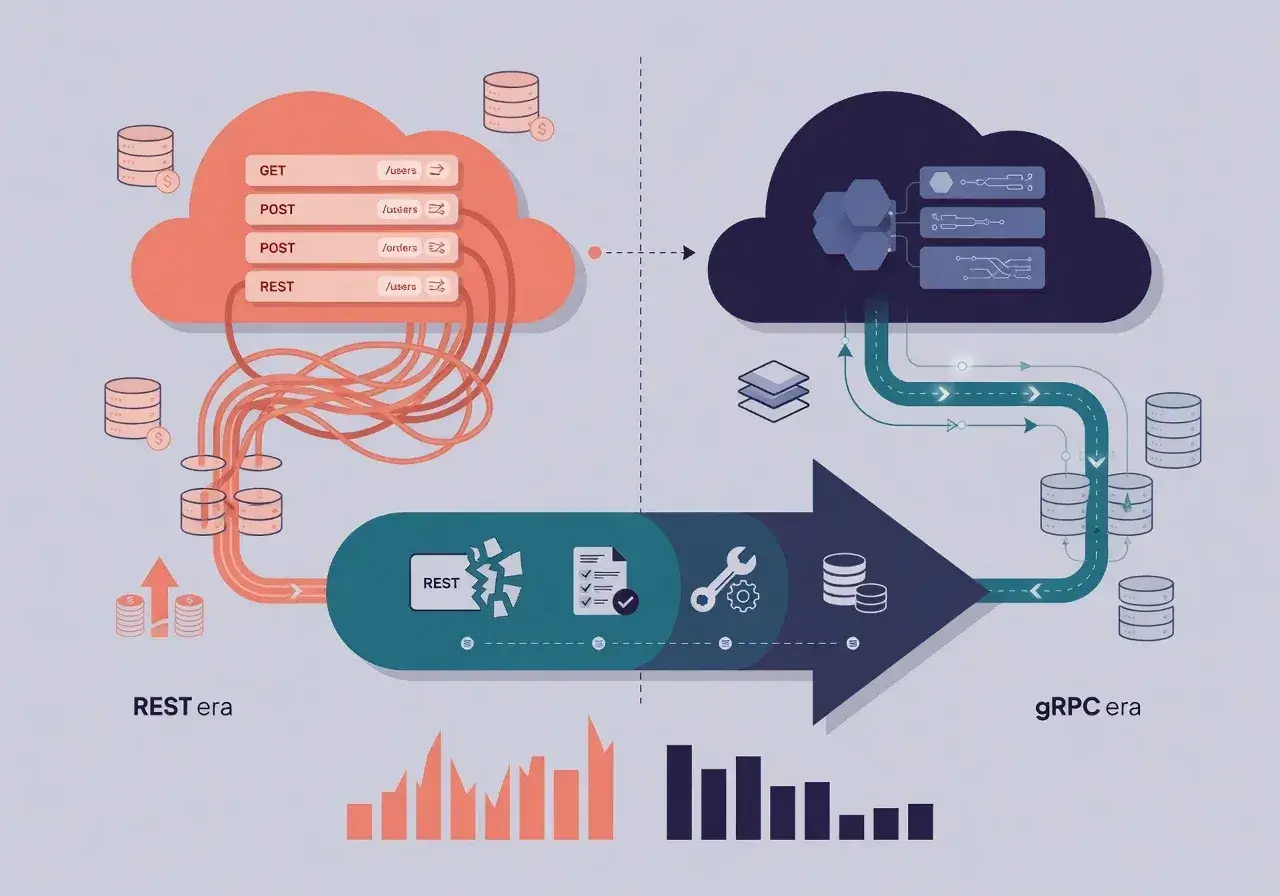

Switching from REST APIs to gRPC reduced cloud infrastructure costs by 40 percent through improved performance, reduced payload sizes, and optimized resource consumption in production environments.

After months of analyzing our microservices architecture, I replaced REST with gRPC and cut our cloud bill by 40 percent. Our engineering team had noticed escalating cloud expenses tied directly to API communication overhead, which prompted us to explore alternatives that could deliver both performance gains and cost savings without compromising system reliability.

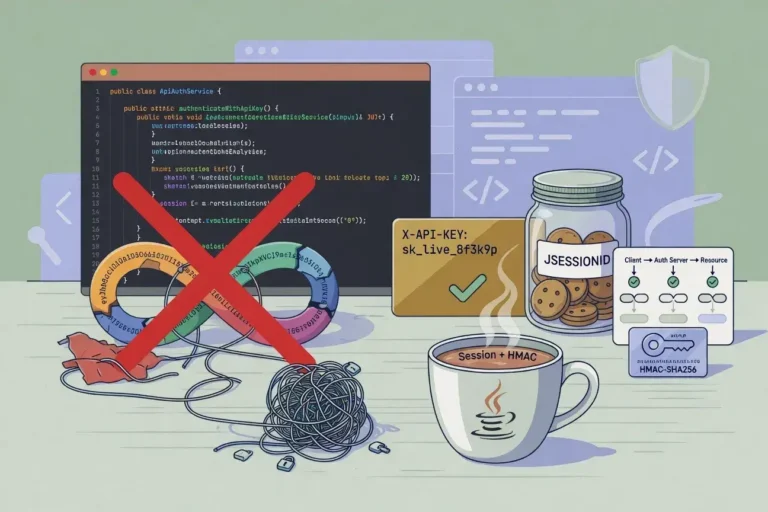

Understanding the cost problem with REST APIs

Traditional REST APIs served our platform well during initial growth phases, but scaling revealed hidden expenses. Each request carried substantial HTTP overhead and a verbose JSON payload, and complex operations required multiple round trips.

Key expense drivers in REST architecture

- Large JSON payloads consuming bandwidth and increasing transfer costs

- Multiple HTTP connections tying up resources while idle

- Text-based serialization requiring additional CPU cycles

- Frequent polling keeping connections alive unnecessarily

Our monthly cloud bill reflected these inefficiencies through compute hours, data transfer charges, and load balancer costs. The billing breakdown showed that inter-service communication accounted for nearly half our infrastructure expenses, creating an urgent need for optimization.

Why gRPC emerged as the solution

gRPC offered compelling advantages that directly addressed our cost concerns. Built on HTTP/2 and Protocol Buffers, this framework promised efficiency improvements we desperately needed.

The binary serialization format reduced payload sizes by 60-70 percent compared to JSON. HTTP/2 multiplexing allowed many concurrent requests to share a single connection, dramatically cutting connection overhead. Bidirectional streaming eliminated wasteful polling patterns that kept servers busy handling empty requests.
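
To make the payload difference concrete, here is a minimal Python sketch that hand-encodes one small message in the Protocol Buffers wire format and compares its size with the equivalent JSON. The message, its field names, and its field numbers are hypothetical, not taken from our services, and production code would use classes generated by `protoc` rather than a hand-rolled encoder.

```python
import json


def encode_varint(value: int) -> bytes:
    """Encode a non-negative integer as a Protocol Buffers base-128 varint."""
    if value < 0:
        raise ValueError("this sketch only handles non-negative integers")
    out = bytearray()
    while True:
        low7 = value & 0x7F
        value >>= 7
        if value:
            out.append(low7 | 0x80)  # continuation bit: more bytes follow
        else:
            out.append(low7)
            return bytes(out)


def encode_int_field(field_number: int, value: int) -> bytes:
    """Tag with wire type 0 (varint), followed by the varint value."""
    return encode_varint((field_number << 3) | 0) + encode_varint(value)


def encode_string_field(field_number: int, text: str) -> bytes:
    """Tag with wire type 2 (length-delimited), byte length, then UTF-8 bytes."""
    data = text.encode("utf-8")
    return encode_varint((field_number << 3) | 2) + encode_varint(len(data)) + data


# ASSUMPTION: hypothetical inventory update; names and field numbers are illustrative.
update = {"sku_id": 48213, "warehouse": "eu-west-1", "quantity": 150}

json_bytes = json.dumps(update).encode("utf-8")
wire_bytes = (
    encode_int_field(1, update["sku_id"])
    + encode_string_field(2, update["warehouse"])
    + encode_int_field(3, update["quantity"])
)

print(f"JSON: {len(json_bytes)} bytes, binary: {len(wire_bytes)} bytes")
```

The ratio depends heavily on message shape: records dominated by short numeric fields shrink the most, while payloads that are mostly long strings shrink far less, because the string bytes themselves are carried unchanged.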

Technical advantages driving cost reduction

- Protocol Buffers providing compact binary encoding

- Built-in client-side load balancing reducing infrastructure complexity

- Strongly typed contracts catching many errors before they reach runtime

- Native support for streaming reducing latency

These features translated directly into lower resource consumption. Smaller payloads meant reduced bandwidth costs, while connection reuse decreased the number of active instances needed to handle traffic.

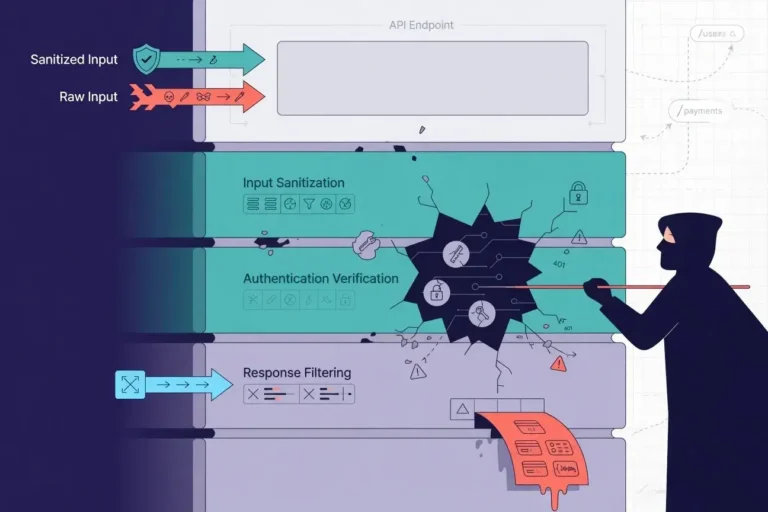

Implementation strategy and migration approach

We adopted a gradual migration strategy rather than attempting a complete system overhaul. Starting with internal microservices that handled high-volume traffic allowed us to measure impact without risking customer-facing functionality.

The team identified services with the highest communication frequency as initial candidates. Payment processing, inventory management, and user authentication services exchanged millions of messages daily, making them perfect test cases for gRPC implementation.

We maintained REST endpoints for external partners and mobile clients while converting internal communication to gRPC. This hybrid approach preserved backward compatibility while capturing immediate cost benefits from our highest-traffic pathways.

Monitoring tools tracked resource utilization before and after migration, providing concrete data on CPU usage, memory consumption, and network transfer volumes. These metrics validated our hypothesis and guided subsequent migration phases.

Measuring the 40 percent cost reduction

Three months after we completed the migration, our monthly cloud bill was 40 percent lower than the baseline we had measured before it. The savings came from multiple sources that added up to a substantial monthly reduction.

Primary cost savings categories

- Compute resources decreased by 35 percent due to efficient serialization

- Data transfer costs dropped 50 percent from smaller payloads

- Load balancer expenses reduced 30 percent with connection pooling

- Instance count requirements fell 25 percent overall
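
How category-level reductions combine into one overall figure depends on how much of the bill each category represents. The sketch below shows that arithmetic as a weighted average. The per-category savings are the ones listed above, but the spend shares are invented purely for illustration, since the actual split of our bill is not given here. The instance-count figure is left out on the assumption that it overlaps with compute.

```python
def blended_saving(spend_share: dict[str, float], saving: dict[str, float]) -> float:
    """Weighted average of per-category savings, weighted by share of the bill."""
    total_share = sum(spend_share.values())
    if abs(total_share - 1.0) > 1e-9:
        raise ValueError("spend shares must sum to 1.0")
    return sum(spend_share[name] * saving[name] for name in spend_share)


# Per-category reductions from the list above.
saving = {"compute": 0.35, "data_transfer": 0.50, "load_balancer": 0.30}

# ASSUMPTION: hypothetical split of the monthly bill, for illustration only.
spend_share = {"compute": 0.50, "data_transfer": 0.30, "load_balancer": 0.20}

print(f"Blended saving: {blended_saving(spend_share, saving):.1%}")
```

With this made-up split the blended figure lands a little under 40 percent; a bill weighted more heavily toward data transfer would land higher, and one dominated by load balancers would land lower.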

Beyond direct infrastructure costs, we observed secondary benefits. Faster response times improved user experience, while reduced latency decreased timeout-related retries that previously wasted resources. The strongly typed nature of Protocol Buffers caught errors during development rather than production, reducing debugging time and associated costs.

Challenges encountered during migration

The transition wasn't without obstacles. Learning Protocol Buffer syntax required time investment from the development team. Debugging binary protocols proved more complex than inspecting JSON payloads.

Tooling gaps presented initial friction. REST APIs benefited from mature ecosystems with browser-based testing tools and extensive documentation. gRPC required specialized clients and additional setup for local development environments.

We addressed these challenges through internal training sessions, creating custom debugging tools, and establishing clear documentation standards. The team developed helper scripts that converted Protocol Buffer messages to readable formats, bridging the gap between developer experience and production efficiency.
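
The sketch below is not one of those scripts; it is a hypothetical illustration of the idea. It walks the top-level fields of a serialized Protocol Buffers message without needing its schema, similar in spirit to `protoc --decode_raw`. It covers only the four non-deprecated wire types and does not descend into nested messages.

```python
def read_varint(buf: bytes, pos: int) -> tuple[int, int]:
    """Return (value, next_position) for the varint starting at pos."""
    result = 0
    shift = 0
    while True:
        if pos >= len(buf):
            raise ValueError("truncated varint")
        byte = buf[pos]
        pos += 1
        result |= (byte & 0x7F) << shift
        if not byte & 0x80:  # no continuation bit: this was the last byte
            return result, pos
        shift += 7


def decode_raw(buf: bytes) -> list[tuple[int, str, object]]:
    """List (field_number, wire_type_name, value) for each top-level field."""
    fields = []
    pos = 0
    while pos < len(buf):
        tag, pos = read_varint(buf, pos)
        field_number, wire_type = tag >> 3, tag & 0x07
        if wire_type == 0:  # varint
            value, pos = read_varint(buf, pos)
            fields.append((field_number, "varint", value))
        elif wire_type == 2:  # length-delimited: string, bytes, or nested message
            length, pos = read_varint(buf, pos)
            if pos + length > len(buf):
                raise ValueError("truncated length-delimited field")
            fields.append((field_number, "bytes", buf[pos:pos + length]))
            pos += length
        elif wire_type == 1:  # fixed 64-bit, kept as raw bytes
            fields.append((field_number, "fixed64", buf[pos:pos + 8]))
            pos += 8
        elif wire_type == 5:  # fixed 32-bit, kept as raw bytes
            fields.append((field_number, "fixed32", buf[pos:pos + 4]))
            pos += 4
        else:
            raise ValueError(f"unsupported wire type {wire_type}")
    return fields


# Field 1 = varint 150, field 2 = the string "testing".
sample = bytes.fromhex("089601" "120774657374696e67")
for field in decode_raw(sample):
    print(field)
```

When the schema is available, the official `protobuf` Python package can render a message as JSON through `google.protobuf.json_format.MessageToJson`, which gives field names rather than numbers and is usually the better choice.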

Browser compatibility limitations meant maintaining REST endpoints for web clients, adding complexity to our API strategy. However, the cost savings from backend optimization justified this dual-protocol approach.

Performance improvements beyond cost savings

While cost reduction drove our initial interest, performance gains delivered unexpected value. Average response times decreased by 45 percent across migrated services.

The streaming capabilities enabled real-time features previously impractical with REST polling. Inventory updates, order status changes, and notifications now reach clients in near real time instead of waiting for the next poll, improving customer satisfaction metrics.
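
A small simulation shows why polling is wasteful for sparse events. This is a sketch under made-up assumptions (three events in one minute, a two-second poll interval), not a measurement from our system: it counts how many polls return nothing, compared with one pushed message per event on a server stream.

```python
def polling_requests(event_times: list[float], poll_interval: float, duration: float) -> tuple[int, int]:
    """Return (total_polls, empty_polls) for a client polling at a fixed interval."""
    total = 0
    empty = 0
    last_poll = 0.0
    tick = 1
    while tick * poll_interval <= duration:
        now = tick * poll_interval
        # A poll is useful only if at least one event arrived since the last poll.
        has_new = any(last_poll < t <= now for t in event_times)
        total += 1
        if not has_new:
            empty += 1
        last_poll = now
        tick += 1
    return total, empty


def streaming_messages(event_times: list[float], duration: float) -> int:
    """With a server-side stream, one message is pushed per event."""
    return sum(1 for t in event_times if t <= duration)


# ASSUMPTION: hypothetical workload, three order-status changes in 60 seconds.
events = [7.5, 31.0, 52.2]
total, empty = polling_requests(events, poll_interval=2.0, duration=60.0)
pushed = streaming_messages(events, duration=60.0)
print(f"polling: {total} requests ({empty} empty), streaming: {pushed} messages")
```

Shortening the poll interval reduces delivery delay but increases the number of empty requests, which is the trade-off streaming removes.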

Measurable performance enhancements

- API latency reduced from 120ms to 65ms average

- Throughput increased by 200 percent on identical hardware

- Error rates dropped 40 percent due to type safety
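
As a quick check on how these figures relate, the following sketch computes the percentage change behind the latency numbers and converts the throughput increase into a multiplier. It uses only the values listed above; the helper name is our own.

```python
def percent_change(before: float, after: float) -> float:
    """Signed change from before to after, as a percentage of before."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (after - before) / before * 100.0


# Average latency in milliseconds, from the list above.
latency_change = percent_change(120.0, 65.0)

# A 200 percent increase means the new throughput is three times the baseline.
throughput_multiplier = 1 + 200 / 100

print(round(latency_change, 1), throughput_multiplier)
```

The latency change works out to roughly 46 percent, in line with the 45 percent average reduction reported across migrated services.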

These improvements created a virtuous cycle. Better performance meant happier users, while reduced resource consumption lowered costs. The combination justified continued investment in gRPC adoption across remaining services.

Lessons learned and best practices

Our experience revealed several insights valuable for teams considering similar migrations. Starting small proved essential, allowing us to build expertise before tackling complex services.

Investing in monitoring infrastructure before migration provided baseline metrics crucial for measuring success. Without clear before-and-after comparisons, demonstrating value would have been challenging.

Maintaining backward compatibility through API gateways protected existing integrations while enabling internal optimization. This strategy prevented migration from becoming an all-or-nothing proposition.
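
The gateway pattern can be sketched as a thin REST-facing adapter that validates JSON and forwards to a typed internal call. Everything named here (`StockRequest`, `get_stock`, `rest_get_stock`, the inventory data) is hypothetical, and the internal function is a plain Python stand-in for what would be a generated gRPC client stub in a real deployment.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class StockRequest:
    """Typed internal request; stands in for a generated Protocol Buffers message."""
    sku_id: int
    warehouse: str


@dataclass(frozen=True)
class StockReply:
    sku_id: int
    quantity: int


def get_stock(request: StockRequest) -> StockReply:
    """Hypothetical internal service; in production this would be a gRPC stub call."""
    inventory = {(48213, "eu-west-1"): 150}  # ASSUMPTION: illustrative data only
    return StockReply(request.sku_id, inventory.get((request.sku_id, request.warehouse), 0))


def rest_get_stock(body: str) -> tuple[int, str]:
    """REST-facing adapter: validate JSON, call the typed service, return (status, JSON)."""
    try:
        payload = json.loads(body)
        request = StockRequest(sku_id=int(payload["sku_id"]), warehouse=str(payload["warehouse"]))
    except (ValueError, KeyError, TypeError) as exc:
        return 400, json.dumps({"error": f"invalid request: {exc}"})
    reply = get_stock(request)
    return 200, json.dumps({"sku_id": reply.sku_id, "quantity": reply.quantity})


print(rest_get_stock('{"sku_id": 48213, "warehouse": "eu-west-1"}'))
print(rest_get_stock('{"warehouse": "eu-west-1"}'))
```

Keeping the translation in one adapter layer means external clients keep their JSON contract while the internal call stays typed, so the two sides can evolve separately.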

Documentation became more critical with gRPC than with REST. The learning curve required comprehensive guides, example code, and troubleshooting resources to maintain development velocity during transition periods.

Conclusion: Strategic technology choices drive business value

Replacing REST with gRPC delivered measurable financial impact while improving system performance. The 40 percent cost reduction validated our architectural decision and demonstrated how thoughtful technology choices directly affect business outcomes. Teams facing similar scaling challenges should evaluate gRPC not just as a technical upgrade, but as a strategic investment in operational efficiency.